Cryptographic protocols for server safety are paramount in today’s digital landscape. Servers, the backbone of online services, face constant threats from malicious actors seeking to exploit vulnerabilities. This exploration delves into the critical role of cryptography in securing servers, examining various protocols, algorithms, and best practices to ensure data integrity, confidentiality, and availability. We’ll dissect symmetric and asymmetric encryption, hashing algorithms, secure communication protocols like TLS/SSL, and key management strategies, alongside advanced techniques like homomorphic encryption and zero-knowledge proofs.

Understanding these safeguards is crucial for building robust and resilient server infrastructure.

From the fundamentals of AES and RSA to the complexities of PKI and mitigating attacks like man-in-the-middle intrusions, we’ll navigate the intricacies of securing server environments. Real-world examples of breaches will highlight the critical importance of implementing strong cryptographic protocols and adhering to best practices. This comprehensive guide aims to equip readers with the knowledge needed to safeguard their servers from the ever-evolving threat landscape.

Introduction to Cryptographic Protocols in Server Security

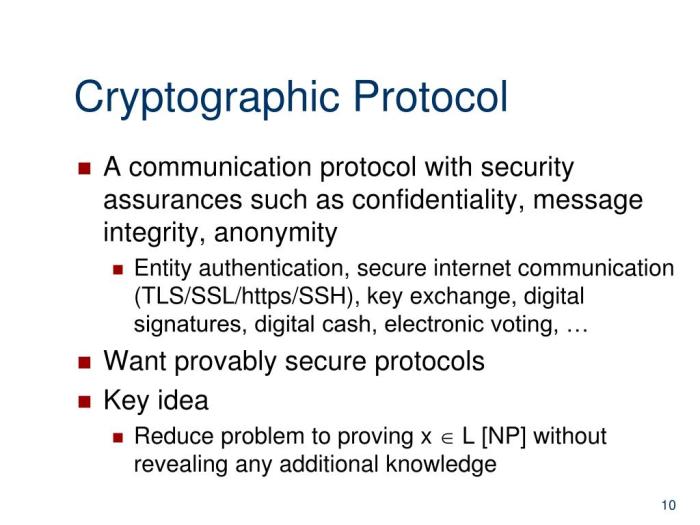

Cryptography forms the bedrock of modern server security, providing the essential tools to protect sensitive data and ensure the integrity and confidentiality of server operations. Without robust cryptographic protocols, servers are vulnerable to a wide range of attacks, potentially leading to data breaches, service disruptions, and significant financial losses. Understanding the fundamental role of cryptography and the types of threats it mitigates is crucial for maintaining a secure server environment.

The primary function of cryptography in server security is to protect data at rest and in transit. This involves employing various techniques to ensure confidentiality (preventing unauthorized access), integrity (guaranteeing data hasn’t been tampered with), authentication (verifying the identity of users and servers), and non-repudiation (preventing denial of actions). These cryptographic techniques are implemented through protocols that govern the secure exchange and processing of information.

Cryptographic Threats to Servers

Servers face a diverse array of threats that exploit weaknesses in cryptographic implementations or protocols. These threats can broadly be categorized into attacks targeting confidentiality, integrity, and authentication. Examples include eavesdropping attacks (where attackers intercept data in transit), man-in-the-middle attacks (where attackers intercept and manipulate communication between two parties), data tampering attacks (where attackers modify data without detection), and impersonation attacks (where attackers masquerade as legitimate users or servers).

The severity of these threats is amplified by the increasing reliance on digital infrastructure and the value of the data stored on servers.

Examples of Server Security Breaches Due to Cryptographic Weaknesses

Several high-profile security breaches highlight the devastating consequences of inadequate cryptographic and security practices. The Heartbleed vulnerability (2014), a missing bounds check in OpenSSL’s implementation of the TLS heartbeat extension, allowed attackers to read chunks of server memory that could contain private keys, session data, and user credentials. Because OpenSSL was so widely deployed, this single implementation bug exposed a large share of HTTPS servers worldwide. The Equifax breach (2017) was not caused by a cryptographic flaw: attackers exploited a known, unpatched vulnerability in the Apache Struts framework to gain unauthorized access to sensitive customer data, including Social Security numbers and credit card information.

The failure to patch known vulnerabilities promptly played a significant role in the damage done in both incidents. These real-world examples underscore the critical need for rigorous security practices, including the adoption of strong, well-vetted cryptographic implementations and timely patching of vulnerabilities.

Symmetric-key Cryptography for Server Protection

Symmetric-key cryptography plays a crucial role in securing servers by employing a single, secret key for both encryption and decryption. This approach offers significant performance advantages over asymmetric methods, making it ideal for protecting large volumes of data at rest and in transit. This section will delve into the mechanisms of AES, compare it to other symmetric algorithms, and illustrate its practical application in server security.


AES Encryption and Modes of Operation

The Advanced Encryption Standard (AES), a widely adopted symmetric block cipher, transforms fixed 128-bit blocks of plaintext into ciphertext through repeated rounds of substitution (SubBytes), permutation (ShiftRows), mixing (MixColumns), and key addition (AddRoundKey). The key length can be 128, 192, or 256 bits, which sets the number of rounds (10, 12, or 14) and the security margin. With appropriately sized keys, no practical attack on the full cipher is known; practical weaknesses tend to come from how AES is used, such as the mode of operation and key handling.

The choice of operating mode significantly impacts the security and functionality of AES in a server environment. Different modes handle data differently and offer varying levels of protection against various attacks.

- Electronic Codebook (ECB): ECB mode encrypts each block independently, so identical blocks of plaintext produce identical blocks of ciphertext. This leaks patterns in the data and makes ECB unsuitable for securing server data.

- Cipher Block Chaining (CBC): CBC mode XORs each plaintext block with the previous ciphertext block (the first block with an Initialization Vector, or IV) before encryption, so identical plaintext blocks no longer produce identical ciphertext. This significantly enhances security compared to ECB. The IV must be unpredictable, typically random, for each encryption operation. CBC provides confidentiality only; without a separate MAC it is exposed to tampering and padding-oracle attacks.

- Counter (CTR): CTR mode encrypts successive counter blocks (a nonce combined with a block counter) to produce a keystream, which is XORed with the plaintext. Blocks can be processed in parallel, offering performance benefits in high-throughput server environments. The nonce and counter combination must never repeat under the same key, although it does not need to be secret or unpredictable.

- Galois/Counter Mode (GCM): GCM combines CTR-mode encryption with a GHASH authentication tag computed over a Galois field, providing authenticated encryption: confidentiality plus integrity and authenticity of the ciphertext and any associated data. It is the preferred mode for most server applications, provided each nonce (typically 96 bits) is used only once per key.
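Python’s standard library has no AES, so the minimal sketch below swaps in HMAC-SHA256 as the keyed function applied to each counter block; the mode logic (apply a keyed function to nonce plus counter, XOR the result with the data) is the same one CTR uses with AES. It also shows why a repeated nonce is fatal: XORing two ciphertexts made with the same key and nonce cancels the keystream. This is an illustration only and, unlike GCM, provides no integrity; the function names are hypothetical, and production code should use AES-GCM from a vetted library.

```python
import hashlib
import hmac
import secrets

BLOCK_SIZE = 32  # bytes per keystream block (the HMAC-SHA256 output size)


def keystream_block(key: bytes, nonce: bytes, counter: int) -> bytes:
    """Stand-in for AES_k(nonce || counter): a keyed PRF applied to one counter block."""
    return hmac.new(key, nonce + counter.to_bytes(8, "big"), hashlib.sha256).digest()


def ctr_xcrypt(key: bytes, nonce: bytes, data: bytes) -> bytes:
    """CTR-style encryption; decryption is the same call because XOR undoes itself."""
    out = bytearray()
    for offset in range(0, len(data), BLOCK_SIZE):
        ks = keystream_block(key, nonce, offset // BLOCK_SIZE)
        chunk = data[offset:offset + BLOCK_SIZE]
        out.extend(b ^ k for b, k in zip(chunk, ks))
    return bytes(out)


key = secrets.token_bytes(32)
nonce = secrets.token_bytes(12)  # must never repeat under the same key
ciphertext = ctr_xcrypt(key, nonce, b"user record #1001")
assert ctr_xcrypt(key, nonce, ciphertext) == b"user record #1001"

# Nonce reuse: the keystream cancels out, leaking plaintext1 XOR plaintext2.
p1, p2 = b"attack at dawn!!", b"retreat at noon!"
c1, c2 = ctr_xcrypt(key, nonce, p1), ctr_xcrypt(key, nonce, p2)
leak = bytes(a ^ b for a, b in zip(c1, c2))
assert leak == bytes(a ^ b for a, b in zip(p1, p2))
```

Anyone who knows or can guess one of the two plaintexts can recover the other from `leak`, which is why GCM and CTR implementations treat nonce uniqueness as a hard requirement.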

Comparison of AES with 3DES and Blowfish

While AES is the dominant symmetric-key algorithm today, other algorithms like 3DES (Triple DES) and Blowfish have been used extensively. Comparing them reveals their relative strengths and weaknesses in the context of server security.

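| Algorithm | Key Size (bits) | Block Size (bits) | Strengths | Weaknesses |
|---|---|---|---|---|
| AES | 128, 192, 256 | 128 | High security, efficient implementation (hardware acceleration on modern CPUs), widely supported | Requires careful key and nonce management |
| 3DES | 168 (three-key), 112 (two-key) | 64 | Widely supported in legacy systems, long track record | Much slower than AES; effective security of about 112 bits (three-key) is below AES-128; 64-bit blocks are exposed to birthday-bound attacks such as Sweet32; deprecated by NIST |
| Blowfish | 32-448 | 64 | Flexible key size, fast once keys are set up | Older design, less widely scrutinized than AES; 64-bit blocks share the same birthday-bound weakness as 3DES |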
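The 64-bit block size of 3DES and Blowfish matters because of the birthday bound: with an n-bit block, collisions between ciphertext blocks (which in CBC mode leak the XOR of two plaintext blocks) become likely after roughly 2^(n/2) blocks encrypted under one key. A quick calculation shows the gap between 64-bit and 128-bit blocks:

```latex
\begin{aligned}
n = 64:&\quad 2^{32}\ \text{blocks} \times 8\ \text{bytes} = 2^{35}\ \text{bytes} = 32\ \text{GiB} \\
n = 128:&\quad 2^{64}\ \text{blocks} \times 16\ \text{bytes} = 2^{68}\ \text{bytes} = 256\ \text{EiB}
\end{aligned}
```

The Sweet32 attack (2016) exploited this against 3DES and Blowfish in HTTPS and OpenVPN traffic; the AES figure is far beyond any realistic volume of data encrypted under a single key.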

AES Implementation Scenario: Securing Server Data

Consider a web server storing user data in a database. To secure data at rest, the server can encrypt the database files using AES-256 in GCM mode, storing a fresh nonce alongside each encrypted file or record. A strong, randomly generated key is kept in a hardware security module (HSM) or key management system rather than next to the data. When the data is needed, the server decrypts it with the same key, and GCM’s authentication tag reveals any modification.

For data in transit, HTTPS (TLS) can negotiate a cipher suite such as AES-128-GCM to encrypt communication between the server and clients, ensuring the confidentiality and integrity of data transmitted over the network. TLS derives fresh session keys for every connection, so in-transit keys are automatically separate from the key protecting data at rest, which compartmentalizes risk. This layered approach, combining encryption at rest and in transit, provides a robust security posture for sensitive server data.
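When the server manages several keys itself (for example, one for database files and one for backups; both purpose labels below are hypothetical), one common way to keep them compartmentalized without storing many independent secrets is to derive each one from a master key with HKDF (RFC 5869). The minimal sketch below implements HKDF-SHA256 with the standard library; it assumes the master key would normally stay inside an HSM or KMS, and a maintained implementation from a crypto library is preferable in production.

```python
import hashlib
import hmac
import secrets

HASH_LEN = hashlib.sha256().digest_size  # 32 bytes


def hkdf_extract(salt: bytes, ikm: bytes) -> bytes:
    """RFC 5869 extract step: PRK = HMAC-SHA256(salt, IKM); empty salt means HASH_LEN zero bytes."""
    return hmac.new(salt or bytes(HASH_LEN), ikm, hashlib.sha256).digest()


def hkdf_expand(prk: bytes, info: bytes, length: int) -> bytes:
    """RFC 5869 expand step: T(i) = HMAC-SHA256(PRK, T(i-1) || info || i), truncated to length."""
    if length > 255 * HASH_LEN:
        raise ValueError("requested length too large for HKDF-SHA256")
    okm, block, counter = b"", b"", 1
    while len(okm) < length:
        block = hmac.new(prk, block + info + bytes([counter]), hashlib.sha256).digest()
        okm += block
        counter += 1
    return okm[:length]


def derive_key(master_key: bytes, purpose: str, length: int = 32) -> bytes:
    """Derive an independent subkey per purpose; different labels give unrelated keys."""
    return hkdf_expand(hkdf_extract(b"", master_key), purpose.encode(), length)


master_key = secrets.token_bytes(32)  # normally held in an HSM or KMS
db_key = derive_key(master_key, "db-files-at-rest-v1")      # hypothetical label
backup_key = derive_key(master_key, "backups-at-rest-v1")   # hypothetical label
assert db_key != backup_key and len(db_key) == 32
```

Putting a version suffix in each label makes it easy to rotate one purpose’s key without touching the others.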

Asymmetric-key Cryptography and its Applications in Server Security

Asymmetric-key cryptography, also known as public-key cryptography, forms a cornerstone of modern server security. Unlike symmetric-key cryptography, which relies on a single secret key shared between parties, asymmetric cryptography utilizes a pair of keys: a public key, freely distributed, and a private key, kept secret by the owner. This key pair allows for secure communication and authentication in scenarios where sharing a secret key is impractical or insecure.

Asymmetric encryption offers several advantages for server security, including the ability to securely establish shared secrets over an insecure channel, authenticate server identity, and ensure data integrity. This section will explore the application of RSA and Elliptic Curve Cryptography (ECC) within server security contexts.

RSA for Securing Server Communications and Authentication

The RSA algorithm, named after its inventors Rivest, Shamir, and Adleman, is a widely used asymmetric algorithm. In server security, RSA plays a crucial role in protecting key exchange and, above all, in authenticating server identity. The server generates an RSA key pair, keeping the private key secret and publishing the public key. Anything encrypted with the public key can be decrypted only with the corresponding private key; older TLS versions used this property to let clients send the server a secret for the session, although TLS 1.3 removed RSA key transport and uses RSA only for signatures.

Digital certificates, often based on RSA, bind a server’s public key to its identity, allowing clients to verify the server’s authenticity before establishing a secure connection. This defends against man-in-the-middle attacks in which a malicious actor impersonates the legitimate server.
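The public/private split can be seen in a few lines of textbook RSA. This is a deliberately insecure toy under stated assumptions: the primes are well-known Mersenne primes, the modulus is only about 216 bits, and there is no padding. Real deployments use 2048-bit or larger keys with OAEP padding through a vetted library.

```python
# Toy textbook RSA: insecure parameters, no padding, illustration only.
p = 2**127 - 1          # Mersenne prime
q = 2**89 - 1           # Mersenne prime
n = p * q               # public modulus (about 216 bits)
e = 65537               # public exponent
phi = (p - 1) * (q - 1)
d = pow(e, -1, phi)     # private exponent; modular inverse needs Python 3.8+


def encrypt(m: int) -> int:
    """Anyone holding the public key (n, e) can encrypt."""
    if not 0 <= m < n:
        raise ValueError("message must be an integer in [0, n)")
    return pow(m, e, n)


def decrypt(c: int) -> int:
    """Only the holder of the private exponent d can decrypt."""
    return pow(c, d, n)


secret = int.from_bytes(b"session-secret", "big")
ciphertext = encrypt(secret)
assert decrypt(ciphertext) == secret
```

Without padding, encrypting the same message twice gives the same ciphertext, one of several reasons real RSA encryption always uses a randomized padding scheme.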

Digital Signatures and Data Integrity in Server-Client Interactions

Digital signatures, enabled by asymmetric cryptography, are critical for ensuring data integrity and authenticity in server-client interactions. A server can use its private key to generate a digital signature for a message, which can then be verified by the client using the server’s public key. The digital signature acts as a cryptographic fingerprint of the message, guaranteeing that the message hasn’t been tampered with during transit and confirming the message originated from the server possessing the corresponding private key.

This is essential for secure software updates, code signing, and secure transactions where data integrity and authenticity are paramount. A signature that fails verification indicates that the message was altered, was signed with a different key, or was forged.

Comparison of RSA and ECC

RSA and Elliptic Curve Cryptography (ECC) are both widely used asymmetric algorithms, but they differ significantly in key size and performance. ECC reaches a given security level with much smaller keys than RSA: a 256-bit ECC key offers roughly the same security as a 3072-bit RSA key.

| Algorithm | Typical Key Size (bits) | Performance | Security |
|---|---|---|---|
| RSA | 2048-4096 | Fast public-key operations (encryption, signature verification); slow private-key operations (decryption, signing) and key generation | Strong, but requires much larger keys than ECC for equivalent security |
| ECC | 256-521 | Faster than RSA for private-key operations and key generation at equivalent security levels | Strong; comparable security to RSA with far smaller keys |

The smaller key sizes required by ECC translate to faster computation, reduced bandwidth consumption, and lower energy requirements, making it particularly suitable for resource-constrained devices and applications where performance is critical. While both algorithms provide strong security, ECC’s efficiency advantage makes it increasingly preferred in many server security applications, particularly in mobile and embedded systems.

Hashing Algorithms and their Importance in Server Security

Hashing algorithms are fundamental to server security, providing crucial mechanisms for data integrity verification, password protection, and digital signature generation. These algorithms transform data of arbitrary size into a fixed-size output, known as a hash or digest, which is often displayed as a hexadecimal string. The security of these processes relies heavily on the cryptographic properties of the hashing algorithm employed.

The strength of a hashing algorithm hinges on several key properties. A secure hash function must exhibit collision resistance, pre-image resistance, and second pre-image resistance. Collision resistance means it’s computationally infeasible to find two different inputs that produce the same hash value. Pre-image resistance ensures that, given a hash value, it’s computationally infeasible to find any input that produces it.

Second pre-image resistance guarantees that given an input and its corresponding hash, finding a different input that produces the same hash is computationally infeasible.
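Two behaviors expected of a secure hash are easy to observe with Python’s `hashlib`: the output size is fixed regardless of input size, and a tiny change to the input scrambles the whole digest (the avalanche effect). The messages below are arbitrary examples.

```python
import hashlib


def bit_difference(a: bytes, b: bytes) -> int:
    """Count the bits that differ between two equal-length digests."""
    return sum(bin(x ^ y).count("1") for x, y in zip(a, b))


h1 = hashlib.sha256(b"transfer $100 to alice").digest()
h2 = hashlib.sha256(b"transfer $900 to alice").digest()
h_big = hashlib.sha256(b"x" * 1_000_000).digest()

print(len(h1) * 8, len(h_big) * 8)   # 256 256: output size does not depend on input size
print(bit_difference(h1, h2))        # typically close to 128 of the 256 bits
```

A one-character change flipping roughly half the output bits is what makes it impractical to steer a hash toward a chosen value.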

SHA-256, SHA-3, and MD5: A Comparison

SHA-256, SHA-3, and MD5 are prominent examples of hashing algorithms, each with its strengths and weaknesses. SHA-256 (Secure Hash Algorithm 256-bit) is a widely used member of the SHA-2 family, offering robust security against known attacks. SHA-3 (Secure Hash Algorithm 3), designed with a different underlying structure than SHA-2, provides an alternative with strong collision resistance. MD5 (Message Digest Algorithm 5), while historically significant, is now considered cryptographically broken due to vulnerabilities making collision finding relatively easy.

SHA-256’s strength lies in its long record of resisting cryptanalysis, making it a suitable choice for most security applications; advances in computing may erode its margin over time, but no practical attack on SHA-256 is known. SHA-3’s different internal design (a sponge construction based on Keccak) provides a strong alternative should a weakness ever be found in SHA-2. MD5’s susceptibility to practical collision attacks renders it unsuitable for security-sensitive applications where collision resistance is paramount.

Its use should be avoided for any security purpose in modern systems.
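In Python’s `hashlib`, for instance, the three algorithms are called as below. The `usedforsecurity=False` flag (Python 3.9+) marks an MD5 call as a non-security use, such as a cache key, which keeps the intent explicit and lets the call work on FIPS-restricted builds. The input string is an arbitrary example.

```python
import hashlib

data = b"server-config-v7"  # arbitrary example input

sha2_hex = hashlib.sha256(data).hexdigest()     # SHA-2 family, 256-bit digest
sha3_hex = hashlib.sha3_256(data).hexdigest()   # SHA-3 (Keccak sponge), also 256-bit
# MD5 only for non-security purposes such as cache keys; never for passwords or signatures.
cache_key = hashlib.md5(data, usedforsecurity=False).hexdigest()

print(len(sha2_hex), len(sha3_hex), len(cache_key))  # 64 64 32 hex characters
```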

Hashing for Password Storage

Storing passwords directly in a database is a significant security risk. Instead, hashing is employed to protect user credentials. When a user registers, their password is hashed using a strong algorithm like bcrypt or Argon2, which incorporate features like salt and adaptive cost factors to increase security. Upon login, the entered password is hashed using the same algorithm and salt, and the resulting hash is compared to the stored hash.

A match indicates successful authentication without ever exposing the actual password. This approach significantly mitigates the risk of data breaches exposing plain-text passwords.
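bcrypt and Argon2 require third-party packages in Python, so this minimal sketch swaps in scrypt, another salted, memory-hard password hashing function that ships in `hashlib` (when Python is built against OpenSSL 1.1 or later). The cost parameters are illustrative demo values, not a recommendation, and the function names are hypothetical; in practice, store the parameters alongside the salt so they can be raised later.

```python
import hashlib
import hmac
import secrets

# Demo cost parameters (about 16 MiB of memory per hash); tune upward for production.
N, R, P = 2**14, 8, 1


def hash_password(password: str) -> tuple[bytes, bytes]:
    """Return (salt, digest) for storage; a fresh random salt per user."""
    salt = secrets.token_bytes(16)
    digest = hashlib.scrypt(password.encode(), salt=salt, n=N, r=R, p=P, dklen=32)
    return salt, digest


def verify_password(password: str, salt: bytes, stored: bytes) -> bool:
    """Recompute with the stored salt and compare in constant time."""
    candidate = hashlib.scrypt(password.encode(), salt=salt, n=N, r=R, p=P, dklen=32)
    return hmac.compare_digest(candidate, stored)


salt, stored = hash_password("correct horse battery staple")
assert verify_password("correct horse battery staple", salt, stored)
assert not verify_password("wrong guess", salt, stored)
```

Because every user gets a different salt, two users with the same password end up with different stored digests, which defeats precomputed lookup tables.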

Hashing for Data Integrity Checks

Hashing ensures data integrity by generating a hash of a file or data set. This hash acts as a fingerprint. If the data is modified, even slightly, the resulting hash will change. By storing the hash alongside the data, servers can verify data integrity by recalculating the hash and comparing it to the stored value. Any discrepancy indicates data corruption or, provided the stored hash itself is protected, tampering.

This is commonly used for software updates, ensuring that downloaded files haven’t been altered during transmission. The reference hash must come from a trusted source, such as a signed release manifest or an HTTPS-protected page; otherwise an attacker who can replace the file can replace the hash as well.
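A streaming integrity check might look like the minimal sketch below (function names are hypothetical). It reads the file in chunks so large files need not fit in memory, and it assumes the expected hex digest arrived over a trusted channel.

```python
import hashlib
import hmac


def sha256_file(path: str, chunk_size: int = 64 * 1024) -> str:
    """Hash a file incrementally and return the hex digest."""
    h = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(chunk_size), b""):
            h.update(chunk)
    return h.hexdigest()


def verify_file(path: str, expected_hex: str) -> bool:
    """Compare against a reference digest obtained from a trusted source."""
    return hmac.compare_digest(sha256_file(path), expected_hex.strip().lower())
```

Usage: `verify_file("update.tar.gz", published_sha256)`, where both the file name and the published digest are placeholders.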

Hashing in Digital Signatures

Digital signatures rely on hashing to ensure both authenticity and integrity. The sender hashes the document and signs the resulting hash with its private key; with RSA this amounts to applying the private-key operation to the (padded) hash, which is why it is sometimes loosely described as “encrypting the hash”. The signature is sent along with the original document. The recipient hashes the received document and uses the sender’s public key to check the signature against that hash.

A valid signature confirms that the document hasn’t been tampered with and was signed by the holder of the corresponding private key. This is crucial for secure communication and transaction verification in server environments.
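A toy hash-then-sign sketch makes the two steps concrete. It uses textbook RSA with small, publicly known Mersenne primes and no padding, so it is insecure and for illustration only; real signatures use a vetted library with a scheme such as RSA-PSS, ECDSA, or Ed25519. The document contents are an arbitrary example.

```python
import hashlib

# Toy textbook RSA key: insecure (small, well-known primes; no padding).
P, Q = 2**127 - 1, 2**89 - 1
N, E = P * Q, 65537
D = pow(E, -1, (P - 1) * (Q - 1))   # private exponent


def digest_int(message: bytes) -> int:
    """Hash the message and map the digest into [0, N) (real schemes pad instead of reducing)."""
    return int.from_bytes(hashlib.sha256(message).digest(), "big") % N


def sign(message: bytes) -> int:
    """Signer: apply the private-key operation to the hash."""
    return pow(digest_int(message), D, N)


def verify(message: bytes, signature: int) -> bool:
    """Verifier: recompute the hash and compare it with the public-key operation on the signature."""
    return pow(signature, E, N) == digest_int(message)


document = b"invoice #2024-17: total 1,250.00"
signature = sign(document)
assert verify(document, signature)
assert not verify(b"invoice #2024-17: total 9,250.00", signature)
```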

Secure Communication Protocols (TLS/SSL)

Transport Layer Security (TLS) and its predecessor, Secure Sockets Layer (SSL), are cryptographic protocols designed to provide secure communication over a network. They are essential for protecting sensitive data transmitted between a client (like a web browser) and a server (like a website). All versions of SSL, as well as TLS 1.0 and 1.1, are now deprecated; current deployments should use TLS 1.2 or TLS 1.3. This section details the handshake process, the role of certificates and PKI, and common vulnerabilities and mitigation strategies.

The primary function of TLS/SSL is to establish a secure connection by encrypting the data exchanged between the client and the server. This prevents eavesdropping and tampering with the communication. It achieves this through a series of steps known as the handshake process, which involves key exchange, authentication, and cipher suite negotiation.

The TLS/SSL Handshake Process

The TLS/SSL handshake is a complex process, but it can be summarized in several key steps. Initially, the client initiates the connection by sending a “ClientHello” message to the server. This message includes details such as the supported cipher suites (combinations of encryption algorithms and hashing algorithms), the client’s preferred protocol version, and a randomly generated number called the client random.

The server responds with a “ServerHello” message, selecting the protocol version and a cipher suite from those offered by the client. It also includes a server random number. Next, the server sends its certificate, which contains its public key and is digitally signed by a trusted Certificate Authority (CA). The client verifies the certificate’s validity (its signature chain up to a trusted CA, its validity period, and that it matches the requested hostname) and extracts the server’s public key.

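The client and server then establish a shared pre-master secret. With RSA key exchange (TLS 1.2 and earlier), the client generates the pre-master secret and sends it encrypted with the server’s public key. With ephemeral Diffie-Hellman (DHE or ECDHE), both sides exchange ephemeral public values, with the server signing its value, and each side computes the same secret independently, which provides forward secrecy. The pre-master secret is combined with the client random and server random to derive the session keys for encryption and decryption. Finally, each side sends a ChangeCipherSpec message followed by an encrypted Finished message that authenticates the entire handshake transcript, after which all further communication is encrypted. TLS 1.3 streamlines this flow: it drops RSA key exchange in favor of ephemeral Diffie-Hellman (or pre-shared keys) and encrypts most of the handshake itself.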
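The ephemeral Diffie-Hellman idea behind DHE and ECDHE can be sketched in a few lines. Everything here is a simplified, insecure toy under stated assumptions: the group (integers modulo the Mersenne prime 2^127 - 1 with generator 3) is not a standardized TLS group, the exchanged values are not authenticated, and a single HMAC call stands in for the real TLS key schedule. It only shows that both sides reach the same secret without ever transmitting it, then mix in both randoms to obtain a session key.

```python
import hashlib
import hmac
import secrets

# Toy group parameters (NOT a standardized TLS group; illustration only).
P = 2**127 - 1
G = 3


def ephemeral_keypair() -> tuple[int, int]:
    """Fresh private exponent and public value for a single handshake."""
    private = secrets.randbelow(P - 3) + 2
    return private, pow(G, private, P)


def derive_session_key(my_private: int, peer_public: int,
                       client_random: bytes, server_random: bytes) -> bytes:
    """Compute the shared (pre-master-like) secret, then mix in both randoms."""
    shared = pow(peer_public, my_private, P)
    # Simplified stand-in for the TLS key schedule (TLS 1.2 PRF / TLS 1.3 HKDF).
    return hmac.new(shared.to_bytes(16, "big"),
                    b"session key" + client_random + server_random,
                    hashlib.sha256).digest()


client_random, server_random = secrets.token_bytes(32), secrets.token_bytes(32)
c_priv, c_pub = ephemeral_keypair()   # client sends c_pub
s_priv, s_pub = ephemeral_keypair()   # server sends s_pub (signed, in real TLS)
client_key = derive_session_key(c_priv, s_pub, client_random, server_random)
server_key = derive_session_key(s_priv, c_pub, client_random, server_random)
assert client_key == server_key
```

Because the private exponents are discarded after each handshake, a later leak of the server’s long-term certificate key does not reveal these session keys, which is the forward secrecy property mentioned above.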

The Role of Certificates and Public Key Infrastructure (PKI)

Digital certificates are fundamental to the security of TLS/SSL connections. A certificate is a digitally signed document that binds a public key to an identity (e.g., a website). It assures the client that it is communicating with the intended server and not an imposter. Public Key Infrastructure (PKI) is a system of digital certificates, Certificate Authorities (CAs), and registration authorities that manage and issue these certificates.

CAs are trusted third-party organizations that verify the identity of the entities requesting certificates and digitally sign them. The client’s trust in the server’s certificate is based on the client’s trust in the CA that issued the certificate. If the client’s operating system or browser trusts the CA, it will accept the server’s certificate as valid. This chain of trust is crucial for ensuring the authenticity of the server.

Common TLS/SSL Vulnerabilities and Mitigation Strategies

Despite its robust design, TLS/SSL implementations can be vulnerable to various attacks. One common vulnerability is the use of weak or outdated cipher suites. Using strong, modern cipher suites with forward secrecy (ensuring that compromise of long-term keys does not compromise past sessions) is crucial. Another vulnerability stems from improper certificate management, such as using self-signed certificates in production environments or failing to revoke compromised certificates promptly.

Regular certificate renewal and robust certificate lifecycle management are essential mitigation strategies. Protocol-level weaknesses such as POODLE (Padding Oracle On Downgraded Legacy Encryption, against SSL 3.0) and BEAST (Browser Exploit Against SSL/TLS, against CBC mode in TLS 1.0) are mitigated by disabling those legacy protocol versions and cipher suites, together with regular software updates. Finally, Heartbleed exploited a bug in one widely used TLS implementation (OpenSSL) rather than in the protocol itself, highlighting the importance of using well-vetted, actively maintained libraries and patching them promptly.

Implementing strong logging and monitoring practices can also help detect and respond to attacks quickly.
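As one concrete example of these mitigations, Python’s standard `ssl` module can be configured to refuse SSL 3.0, TLS 1.0, and TLS 1.1, and to restrict TLS 1.2 to forward-secret AEAD cipher suites. The cipher string is an illustrative choice rather than a universal recommendation, the function names are hypothetical, and the certificate paths in the comment are placeholders.

```python
import ssl


def client_context() -> ssl.SSLContext:
    """Client side: verify the server's certificate chain and hostname, require TLS 1.2+."""
    ctx = ssl.create_default_context()            # loads the system CA store
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    return ctx


def server_context() -> ssl.SSLContext:
    """Server side: TLS 1.2+ with ECDHE key exchange (forward secrecy) and AEAD ciphers."""
    ctx = ssl.SSLContext(ssl.PROTOCOL_TLS_SERVER)
    ctx.minimum_version = ssl.TLSVersion.TLSv1_2
    # Affects TLS 1.2 suites only; TLS 1.3 suites are forward-secret AEAD by design.
    ctx.set_ciphers("ECDHE+AESGCM:ECDHE+CHACHA20")
    # ctx.load_cert_chain("server.crt", "server.key")  # placeholder paths
    return ctx


cctx = client_context()
assert cctx.verify_mode == ssl.CERT_REQUIRED and cctx.check_hostname
assert server_context().minimum_version == ssl.TLSVersion.TLSv1_2
```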

Implementing Secure Key Management Practices

Effective key management is paramount for maintaining the confidentiality, integrity, and availability of server data. Compromised cryptographic keys represent a significant vulnerability, potentially leading to data breaches, unauthorized access, and service disruptions. Robust key management practices encompass secure key generation, storage, and lifecycle management, minimizing the risk of exposure and ensuring ongoing security.

Secure key generation involves using cryptographically secure pseudorandom number generators (CSPRNGs) to create keys of sufficient length and entropy. Weak or predictable keys are easily cracked, rendering cryptographic protection useless. Keys should also be generated in a manner that prevents tampering or modification during the generation process. This often involves dedicated hardware security modules (HSMs) or secure key generation environments.
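In Python, for example, the `secrets` module draws from the operating system’s CSPRNG, whereas the `random` module is a predictable generator meant for simulations and must never be used for keys. A minimal helper (name hypothetical) might look like:

```python
import secrets


def generate_key(bits: int = 256) -> bytes:
    """Return a fresh symmetric key from the OS CSPRNG."""
    if bits % 8 != 0 or bits < 128:
        raise ValueError("key size must be a whole number of bytes and at least 128 bits")
    return secrets.token_bytes(bits // 8)


aes256_key = generate_key()      # 32 random bytes, e.g. for AES-256
aes128_key = generate_key(128)   # 16 random bytes, e.g. for AES-128
assert len(aes256_key) == 32 and len(aes128_key) == 16
```

For keys that should never exist outside hardware, generation would instead happen inside the HSM, and the application would only ever hold a handle to the key.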

Key Storage and Protection

Storing cryptographic keys securely is crucial to prevent unauthorized access. Best practices advocate for storing keys in hardware security modules (HSMs), which offer tamper-resistant environments specifically designed for protecting sensitive data, including cryptographic keys. HSMs provide physical and logical security measures to safeguard keys from unauthorized access or modification. Alternatively, keys can be encrypted and stored in a secure file system with restricted access permissions, using strong encryption algorithms and robust access control mechanisms.

Regular audits of key access logs are essential to detect and prevent unauthorized key usage. The principle of least privilege should be strictly enforced, limiting access to keys only to authorized personnel and systems.

Key Rotation and Lifecycle Management

Regular key rotation is a critical security measure to mitigate the risk of long-term key compromise. If a key is compromised, the damage is limited to the data it protected while it was in use. Key rotation involves regularly generating new keys and replacing old ones. The frequency of rotation depends on the sensitivity of the data being protected and the risk assessment.

A well-defined key lifecycle management process includes key generation, storage, usage, rotation, and ultimately, secure key destruction. This process should be documented and regularly reviewed to ensure its effectiveness. Automated key rotation mechanisms can streamline this process and reduce the risk of human error.
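The bookkeeping behind rotation can be sketched with key identifiers: new data is always protected with the current key, each result records which key was used, and older keys remain available for verification (or decryption) until they are retired and destroyed. To stay within Python’s standard library, this minimal sketch protects data with HMAC tags rather than encryption; the `KeyRing` class and its methods are hypothetical.

```python
import hashlib
import hmac
import secrets


class KeyRing:
    """Hypothetical versioned key store: the current key protects new data; old keys verify until retired."""

    def __init__(self) -> None:
        self._keys: dict[str, bytes] = {}
        self._next = 1
        self.current_id = ""
        self.rotate()

    def rotate(self) -> str:
        """Generate a new key and make it current; older keys stay for verification."""
        kid = f"k{self._next}"
        self._next += 1
        self._keys[kid] = secrets.token_bytes(32)
        self.current_id = kid
        return kid

    def retire(self, kid: str) -> None:
        """End of lifecycle; a real system would destroy the key inside the HSM or KMS."""
        if kid == self.current_id:
            raise ValueError("cannot retire the active key")
        del self._keys[kid]

    def tag(self, data: bytes) -> tuple[str, bytes]:
        """Protect data with the current key and record which key was used."""
        key = self._keys[self.current_id]
        return self.current_id, hmac.new(key, data, hashlib.sha256).digest()

    def verify(self, data: bytes, kid: str, mac: bytes) -> bool:
        key = self._keys.get(kid)
        if key is None:
            return False  # unknown or retired key
        return hmac.compare_digest(hmac.new(key, data, hashlib.sha256).digest(), mac)


ring = KeyRing()
kid, mac = ring.tag(b"audit-log-entry-1")
ring.rotate()                                          # new data now uses the new key
assert ring.current_id != kid
assert ring.verify(b"audit-log-entry-1", kid, mac)     # old key still verifies
ring.retire(kid)
assert not ring.verify(b"audit-log-entry-1", kid, mac)
```

For encryption keys, the same pattern applies, with the extra step of re-encrypting data under the new key before the old one is retired.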

Common Key Management Vulnerabilities and Their Impact

Proper key management practices are vital in preventing several security risks. Neglecting these practices can lead to severe consequences.

- Weak Key Generation: Using predictable or easily guessable keys significantly weakens the security of the system, making it vulnerable to brute-force attacks or other forms of cryptanalysis. This can lead to complete compromise of encrypted data.

- Insecure Key Storage: Storing keys in easily accessible locations, such as unencrypted files or databases with weak access controls, makes them susceptible to theft or unauthorized access. This can result in data breaches and unauthorized system access.

- Lack of Key Rotation: Failure to regularly rotate keys increases the window of vulnerability if a key is compromised. A compromised key can be used indefinitely to access sensitive data, leading to prolonged exposure and significant damage.

- Insufficient Key Access Control: Allowing excessive access to cryptographic keys increases the risk of unauthorized access or misuse. This can lead to data breaches and system compromise.

- Improper Key Destruction: Failing to securely destroy keys when they are no longer needed leaves them vulnerable to recovery and misuse. This can result in continued exposure of sensitive data even after the key’s intended lifecycle has ended.

Advanced Cryptographic Techniques for Enhanced Server Security

Beyond the foundational cryptographic methods, advanced techniques offer significantly enhanced security for servers handling sensitive data. These techniques address complex scenarios requiring stronger privacy guarantees and more robust security against sophisticated attacks. This section explores three such techniques: homomorphic encryption, zero-knowledge proofs, and multi-party computation.

Homomorphic Encryption for Computation on Encrypted Data

Homomorphic encryption allows computations to be performed on encrypted data without the need for decryption. This is crucial for scenarios where sensitive data must be processed by a third party without revealing the underlying information. For example, a cloud service provider could process encrypted medical records to identify trends without ever accessing the patients’ private health data. There are several types of homomorphic encryption, including partially homomorphic encryption (PHE), somewhat homomorphic encryption (SHE), and fully homomorphic encryption (FHE).

PHE supports an unlimited number of operations of a single type (for example, only addition or only multiplication). SHE supports both addition and multiplication, but only up to a limited depth, because noise in the ciphertext grows with each operation until decryption fails. FHE, the most powerful type, allows for arbitrary computations on encrypted data. However, FHE schemes are currently computationally expensive and less practical for widespread deployment compared to PHE or SHE. The choice of homomorphic encryption scheme depends on the specific computational needs and the acceptable performance cost.
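The Paillier cryptosystem is a classic partially homomorphic scheme: multiplying two ciphertexts yields an encryption of the sum of the plaintexts. The sketch below is a toy under explicit assumptions (small, publicly known Mersenne primes, the common simplification g = n + 1, no side-channel care) that demonstrates the property without being usable for real data.

```python
import math
import secrets

# Toy Paillier key from two well-known Mersenne primes: insecure, illustration only.
p, q = 2**61 - 1, 2**89 - 1
n = p * q
n_sq = n * n
lam = math.lcm(p - 1, q - 1)   # Carmichael function of n (math.lcm needs Python 3.9+)
mu = pow(lam, -1, n)           # valid shortcut because the generator is g = n + 1


def encrypt(m: int) -> int:
    """Enc(m) = (n+1)^m * r^n mod n^2, using (n+1)^m = 1 + m*n (mod n^2)."""
    if not 0 <= m < n:
        raise ValueError("plaintext must be in [0, n)")
    while True:
        r = secrets.randbelow(n - 1) + 1
        if math.gcd(r, n) == 1:
            break
    return (1 + m * n) * pow(r, n, n_sq) % n_sq


def decrypt(c: int) -> int:
    """m = L(c^lambda mod n^2) * mu mod n, where L(x) = (x - 1) // n."""
    return (pow(c, lam, n_sq) - 1) // n * mu % n


def add_encrypted(c1: int, c2: int) -> int:
    """Homomorphic addition: Enc(a) * Enc(b) mod n^2 decrypts to a + b (mod n)."""
    return c1 * c2 % n_sq


total = add_encrypted(encrypt(20), encrypt(22))   # computed without decrypting either input
assert decrypt(total) == 42
```

The party computing `total` needs only the public value n; only the key holder can decrypt the result.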

Zero-Knowledge Proofs for Server Authentication and Authorization

Zero-knowledge proofs (ZKPs) allow a prover to demonstrate the truth of a statement to a verifier without revealing any information beyond the validity of the statement itself. In server security, ZKPs can be used for authentication and authorization. For instance, a user could prove their identity to a server without revealing their password. This is achieved by employing cryptographic protocols that allow the user to demonstrate possession of a secret (like a password or private key) without actually transmitting it.

A common example is the Schnorr identification protocol, in which a prover demonstrates knowledge of the private key x behind a public key y = g^x without revealing x. The use of ZKPs enhances security by minimizing the exposure of sensitive credentials, making it significantly more difficult for attackers to steal or compromise them.
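One round of the Schnorr identification protocol can be sketched as follows. It is a toy under explicit assumptions: it works in the multiplicative group modulo the Mersenne prime 2^127 - 1 with generator 3 rather than a standardized prime-order group or elliptic curve, and the function names are hypothetical. The prover’s secret x is never transmitted; the verifier only sees the commitment t, its own challenge c, and the response s.

```python
import secrets

# Toy group: integers modulo the Mersenne prime 2**127 - 1 with generator 3.
# Illustration only; real systems use a standardized prime-order group or elliptic curve.
P = 2**127 - 1
G = 3
ORDER = P - 1  # exponents can be reduced modulo the group order


def keygen() -> tuple[int, int]:
    x = secrets.randbelow(ORDER - 1) + 1   # prover's long-term secret
    return x, pow(G, x, P)                 # public key y = g^x


def commit() -> tuple[int, int]:
    r = secrets.randbelow(ORDER)           # fresh one-time randomness; reusing it leaks x
    return r, pow(G, r, P)                 # commitment t = g^r


def respond(x: int, r: int, c: int) -> int:
    return (r + c * x) % ORDER             # response s = r + c*x


def verify(y: int, t: int, c: int, s: int) -> bool:
    return pow(G, s, P) == t * pow(y, c, P) % P   # check g^s == t * y^c


x, y = keygen()                            # y is registered with the server in advance
r, t = commit()                            # prover -> verifier: t
c = secrets.randbelow(2**64)               # verifier -> prover: random challenge
s = respond(x, r, c)                       # prover -> verifier: s
assert verify(y, t, c, s)
```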

Multi-Party Computation for Secure Computations Involving Multiple Servers

Multi-party computation (MPC) enables multiple parties to jointly compute a function over their private inputs without revealing anything beyond the output. This is particularly useful in scenarios where multiple servers need to collaborate on a computation without sharing their individual data. Imagine a scenario where several banks need to jointly calculate a risk score based on their individual customer data without revealing the data itself.

MPC allows for this secure computation. Various techniques are used in MPC, including secret sharing and homomorphic encryption. Secret sharing involves splitting a secret into multiple shares distributed among the participating parties, where no individual share reveals anything about the secret. In simple additive (n-of-n) sharing, reconstruction requires all shares, preventing any single party from accessing the complete information; threshold schemes such as Shamir’s secret sharing let any t of n shares reconstruct the secret while fewer reveal nothing. MPC is becoming increasingly important in areas requiring secure collaborative processing of sensitive information, such as financial transactions and medical data analysis.
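A minimal sketch of additive (n-of-n) secret sharing, applied to a hypothetical version of the bank example with a joint sum standing in for the risk score, shows how parties can compute a result without revealing their inputs. It assumes honest-but-curious parties and private channels for distributing shares; real MPC frameworks add protection against malicious participants.

```python
import secrets

PRIME = 2**127 - 1  # shares live in the integers modulo this prime


def share(secret: int, parties: int) -> list[int]:
    """Split `secret` into additive shares; any parties-1 of them are uniformly random."""
    shares = [secrets.randbelow(PRIME) for _ in range(parties - 1)]
    shares.append((secret - sum(shares)) % PRIME)
    return shares


def reconstruct(shares: list[int]) -> int:
    return sum(shares) % PRIME


# Hypothetical private inputs held by three banks.
inputs = {"bank_a": 120, "bank_b": 75, "bank_c": 305}
dealt = [share(value, len(inputs)) for value in inputs.values()]
# Party i receives the i-th share of every input and adds them locally.
partial_sums = [sum(column) % PRIME for column in zip(*dealt)]
joint_total = reconstruct(partial_sums)   # only the aggregate is revealed
assert joint_total == 500
```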

Addressing Cryptographic Attacks on Servers

Cryptographic protocols, while designed to enhance server security, are not impervious to attacks. Understanding common attack vectors is crucial for implementing robust security measures. This section details several prevalent cryptographic attacks targeting servers, outlining their mechanisms and potential impact.

Man-in-the-Middle Attacks

Man-in-the-middle (MitM) attacks involve an attacker secretly relaying and altering communication between two parties who believe they are directly communicating with each other. The attacker intercepts messages from both parties, potentially modifying them before forwarding them. This compromise can lead to data breaches, credential theft, and the injection of malicious code.

Replay Attacks

Replay attacks involve an attacker intercepting a legitimate communication and subsequently retransmitting it to achieve unauthorized access or action. This is particularly effective against systems that do not employ mechanisms to detect repeated messages. For instance, an attacker could capture a valid authentication request and replay it to gain unauthorized access to a server. The success of a replay attack hinges on the lack of adequate timestamping or sequence numbering in the communication protocol.
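One common countermeasure combines a timestamp, a random nonce, and a MAC over the whole request: the server rejects messages outside a freshness window and remembers recently seen nonces. The minimal sketch below assumes a shared HMAC key between client and server and a 30-second window; `sign_request` and `ReplayGuard` are hypothetical names.

```python
import hashlib
import hmac
import secrets
import time
from typing import Optional

NONCE_LEN = 16


def _mac(key: bytes, ts: int, nonce: bytes, body: bytes) -> bytes:
    # Authenticate timestamp, nonce, and body together so none can be swapped out.
    return hmac.new(key, str(ts).encode() + b"|" + nonce + b"|" + body, hashlib.sha256).digest()


def sign_request(key: bytes, body: bytes, ts: Optional[int] = None) -> tuple[int, bytes, bytes]:
    """Client side: attach a timestamp, a fresh random nonce, and a MAC."""
    ts = int(time.time()) if ts is None else ts
    nonce = secrets.token_bytes(NONCE_LEN)
    return ts, nonce, _mac(key, ts, nonce, body)


class ReplayGuard:
    """Server side: accept each authenticated request at most once within the window."""

    def __init__(self, key: bytes, window_s: int = 30) -> None:
        self.key = key
        self.window_s = window_s
        self.seen: dict[bytes, int] = {}  # nonce -> timestamp

    def accept(self, ts: int, nonce: bytes, body: bytes, tag: bytes,
               now: Optional[int] = None) -> bool:
        now = int(time.time()) if now is None else now
        if abs(now - ts) > self.window_s:
            return False  # stale (or from the future)
        if not hmac.compare_digest(_mac(self.key, ts, nonce, body), tag):
            return False  # forged or modified
        # Forget nonces whose requests would now fail the timestamp check anyway.
        self.seen = {n: t for n, t in self.seen.items() if now - t <= self.window_s}
        if nonce in self.seen:
            return False  # replay inside the window
        self.seen[nonce] = ts
        return True


key = secrets.token_bytes(32)
guard = ReplayGuard(key)
body = b"POST /transfer amount=10"
ts, nonce, tag = sign_request(key, body, ts=1_000)
assert guard.accept(ts, nonce, body, tag, now=1_005)
assert not guard.accept(ts, nonce, body, tag, now=1_010)   # replayed copy is rejected
```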

Denial-of-Service Attacks

Denial-of-service (DoS) attacks aim to make a server or network resource unavailable to its intended users. Cryptographic vulnerabilities can be exploited to amplify the effectiveness of these attacks. For example, a computationally intensive cryptographic operation could be targeted, overwhelming the server’s resources and rendering it unresponsive to legitimate requests. Distributed denial-of-service (DDoS) attacks, leveraging multiple compromised machines, significantly exacerbate this problem.

A common approach is flooding the server with a large volume of requests, making it difficult to handle legitimate traffic. Another approach involves exploiting vulnerabilities in the server’s cryptographic implementation to exhaust resources.

Illustrative Example: Man-in-the-Middle Attack

Consider a client (Alice) attempting to securely connect to a server (Bob) using HTTPS. An attacker (Mallory) positions themselves between Alice and Bob.

- Alice initiates a connection to Bob.

- Mallory intercepts the connection request.

- Mallory establishes separate connections with Alice and Bob.

- Mallory relays messages between Alice and Bob, potentially modifying them.

- Alice and Bob believe they are communicating directly, unaware of Mallory’s interception.

- Mallory gains access to sensitive data exchanged between Alice and Bob.

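This illustrates how a MitM attack can compromise the confidentiality and integrity of the communication. The attacker can intercept, modify, and even inject malicious content into the communication stream without either Alice or Bob being aware of their presence. The effectiveness of this attack relies on Mallory’s ability to intercept and control the communication channel. Against HTTPS, it succeeds only if Alice accepts a certificate that Mallory controls, for example because the client skips certificate validation, the user clicks through a certificate warning, or a trusted CA has been compromised. Strong encryption combined with strict certificate and hostname validation, plus mechanisms such as HSTS that prevent downgrades to plain HTTP, defeats this scenario, but vigilance remains crucial.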
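Beyond standard CA and hostname validation, some clients add certificate pinning: they compare a hash of the certificate the server actually presents with a value obtained in advance. The helpers below are a minimal sketch with hypothetical names; the commented usage relies on the standard `ssl` and `socket` calls `create_default_context`, `create_connection`, `wrap_socket`, and `getpeercert(binary_form=True)`, with `HOST` and `EXPECTED_PIN` as placeholders. Pinning must be planned together with certificate renewal, or a legitimate rotation will lock clients out.

```python
import hashlib
import hmac


def cert_fingerprint(der_cert: bytes) -> str:
    """SHA-256 fingerprint of a DER-encoded certificate, as lowercase hex."""
    return hashlib.sha256(der_cert).hexdigest()


def matches_pin(der_cert: bytes, pinned_hex: str) -> bool:
    """True only if the presented certificate hashes to the pinned value."""
    return hmac.compare_digest(cert_fingerprint(der_cert), pinned_hex.replace(":", "").lower())


# Usage against a live server (HOST and EXPECTED_PIN are placeholders):
# import socket, ssl
# ctx = ssl.create_default_context()                 # CA and hostname checks still apply
# with socket.create_connection((HOST, 443)) as raw:
#     with ctx.wrap_socket(raw, server_hostname=HOST) as tls:
#         if not matches_pin(tls.getpeercert(binary_form=True), EXPECTED_PIN):
#             raise ssl.SSLError("certificate does not match pin; possible MitM")
```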

Recap

Securing servers effectively requires a multi-layered approach leveraging robust cryptographic protocols. This exploration has highlighted the vital role of symmetric and asymmetric encryption, hashing algorithms, and secure communication protocols in protecting sensitive data and ensuring the integrity of server operations. By understanding the strengths and weaknesses of various cryptographic techniques, implementing secure key management practices, and proactively mitigating common attacks, organizations can significantly bolster their server security posture.

The ongoing evolution of cryptographic threats necessitates continuous vigilance and adaptation to maintain a strong defense against cyberattacks.

Q&A: Cryptographic Protocols For Server Safety

What is the difference between symmetric and asymmetric encryption?

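Symmetric encryption uses the same key for both encryption and decryption; it is fast but requires the key to be shared securely in advance. Asymmetric encryption uses a public/private key pair, which removes the need to pre-share a secret but is much slower, so in practice it is mainly used to authenticate parties and establish symmetric session keys.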

How often should cryptographic keys be rotated?

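Rotation frequency depends on the sensitivity of the data, the volume of data protected by each key, and the organization’s risk assessment. A fixed schedule, such as every 6-12 months, is a common baseline, and keys should be rotated immediately whenever compromise is suspected.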

What are some common vulnerabilities in TLS/SSL implementations?

Common vulnerabilities include weak cipher suites, certificate mismanagement, and insecure configurations. Regular updates and security audits are essential.

What is a digital signature and how does it enhance server security?

A digital signature uses asymmetric cryptography to verify the authenticity and integrity of data. It ensures that data hasn’t been tampered with and was signed by the holder of the corresponding private key, whose identity a certificate can tie to a trusted source.